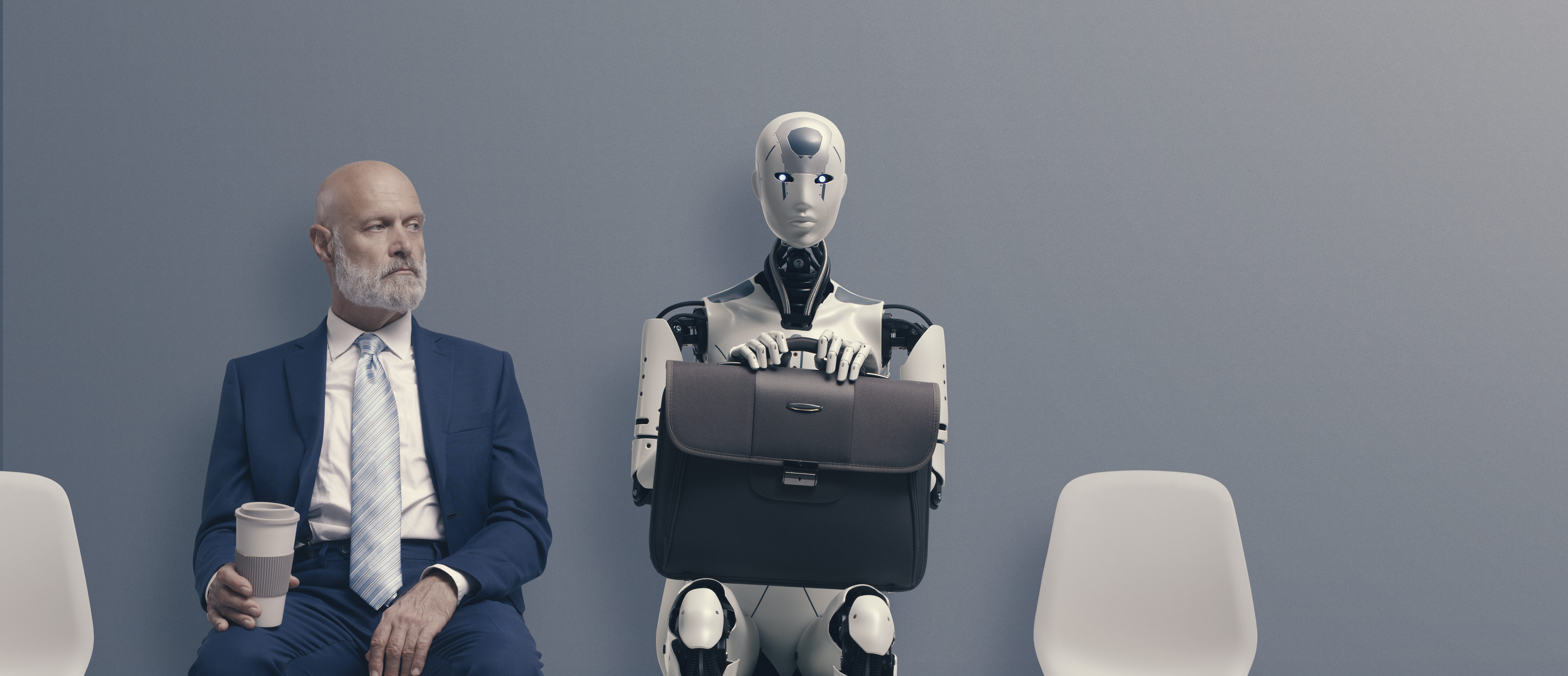

Anthropic recently published a new constitution for ‘Claude’, its generative AI model. Claude is designed for natural conversation, complex reasoning, coding, analysis and content creation. Anthropic was founded with the aim of building AI with a focus on safety and ethics, an ambition shared by the health and care sectors, where safety is paramount given the huge responsibility communities entrust to their care and support providers. Throughout each iteration of its technology, Anthropic has repeatedly sought to connect functionality to human values. This approach resonates with us at Nourish, where we firmly root our AI models in lived experience by always keeping a ‘human in the loop’ throughout the design process. We believe principles are essential for applied AI as we continue to search for the most impactful and effective applications of AI in health and care technology.

Our Director of Data and AI, Sudha Regmi, saw several parallels between Claude’s new constitution and our own approach to applied AI. Here are her three key takeaways from the announcement.

Principles are more important than rules

“When you’re raising kids, you can’t just hand them a list of rules and hope it covers every weird eventuality life throws at them,” explained Sudha. “You try to teach values and principles, then trust they’ll generalise when it matters.”

Anthropic is essentially saying the same thing. No, not that all GenAI is inherently attention-seeking and impulse-driven. (That’s just a good portion of the user base.) They are saying: don’t just tell the model what to do; give it the context to understand why. This gives the model the ability to generalise to novel situations. With ‘rules’ alone, GenAI can become confused by edge cases. With ‘principles’, the model is better equipped to understand context and infer the correct decision.

We need to move past the ‘it was trained on the internet, so every model is the same’ myth in applied AI

“This is an understandable but misleading hangover from early AI scepticism. Pretraining is very important for AI models, but it is not the be-all and end-all, especially for applied AI. Post-training matters too, along with feedback loops, policy layers and product choices. When choosing how to apply AI models to modern challenges, there is no predictable answer. We must, to borrow a technique from social care, review our impact, continuously improve and remain connected to lived experience.”

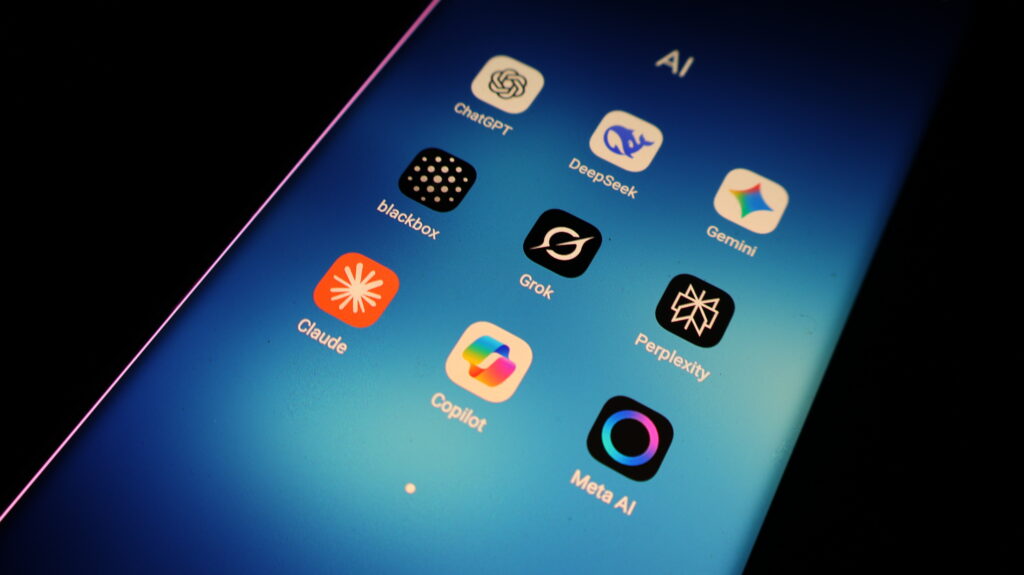

No two experiences are exactly the same. You can see this in the real world, with “the same base model” behaving differently depending on where you use it. Put the same prompt into different tools and compare the output. These differences show up both across different models and across different iterations of the same model. For example, think about the difference in output when Copilot (in GPT-5 mode), ChatGPT and OpenAI’s GPT models accessed via the API perform the same task. Or the differences when using Claude Sonnet in Claude Code versus in Cursor.

There are vast differences between models that share similar origin stories. Applied AI will continue to develop across a huge variety of fields. We must understand the model’s own context more deeply before we can effectively teach it the context of its application.

This isn’t academic; it has an impact on your product!

“The constitution is explicit about priority order: safe → ethical → compliant → helpful. It includes ‘hard constraints’ for especially high-stakes work. It validates that the process of building AI is just as important as the product.”

The process of building applied AI is crucial. Principles must be deliberate, and they can’t just live in a slide deck. They must be reflected in your approach to AI design, including:

Incentivising the right kind of outputs for the context

Health and care are not vibes-based domains. Decisions carry enormous weight and often involve private, sensitive information. Context is essential for understanding both best practice and the reality of that responsibility.

Human-in-the-loop validation with clinical experts

Lived experience remains the alpha and the omega for technology design. Without human input into the design process, AI can never be considered responsibly designed.

Tight feedback loops with our customers to pressure-test what “good” looks like in practice

That’s what breeds confidence in applied AI. Not a reverential ‘this is what the model said to do’. Not black-box decision makers. But results driven by a combination of principles, process and validation.

Continuing on from Claude’s constitution

If you’re building with AI right now, or even thinking about adopting AI tools, we’d recommend reading the constitution, even if you disagree with parts of it. It’s a useful window into how one lab is translating “alignment” into something operational.

If you want to know more about Nourish Care’s approach to applied AI for health, care and support, click here.