We hosted our AI and Data Conference at Bletchley Park this January. The historic backdrop inspired us to look to the future potential of AI and data in social care. Over the course of the day we heard from customer panels, product managers and Nourish users on and off stage. It was an inspiring event that energised all attendees for the year ahead!

The day started once everyone had settled into the historic venue. Our Chief Product Officer, Matthew Stewart, opened the proceedings with an overview of our position on AI, our ambitions for care intelligence, and the odd seasonal joke here and there. This was followed by our Director of AI & Data, Sudha Regmi, detailing the Nourish view of Analytics, Insights and Nourish AI.

We had two panels with customers, one on either side of lunch. The first panel, led by our Chief Customer Officer, Paul Barnes, focussed on Nourish Analytics. Paul was joined by Emma Lindblom, Head of Quality Improvement at MHA; Gareth Williams, Flexible Workforce Manager at Brandon Trust; and Benjamin Winfield, Product Owner Lead for Quality and Business Systems at Lifeways Group. They built upon Sudha’s point about moving from descriptive to prescriptive with Analytics, and discussed the journey of embedding the functionality in an organisation.

Mark Gray, Nourish AI Product Lead, hosted our second panel. It focussed on our new AI platform, Nourish Confidence, and its co-production journey. Our Clinical and Safety Lead, Carrie Taylor, and Emma Brazier, Business Analyst for Sanctuary Supported Living, joined him on the panel. They discussed our journey to finding the right application for AI, how we can change the way we approach audits to drive continuous improvement for people receiving support, and how we can bring together different functionality to provide holistic, truly person-centred care and support.

Over lunch, attendees were treated to a tour of the venue, taking in the history and groundbreaking work that took place in the birthplace of AI.

The best discussions happened in between all of the sessions. We took the opportunity to display many of the new platforms and features of the Nourish ecosystem. Talking to people from across the wide range of care and support services represented by our attendees is always the highlight of these events. We closed with a discussion of our roadmap and a special announcement about our AI Labs from our Chief Technology Officer, Jamie Hibbard!

We are incredibly grateful to everyone who joined us at Bletchley Park. Some of our attendees even jumped on camera with us to share their experiences and perspectives. You can see these Nourish users in the video, and look out for longer versions of their interviews on our social media!

Ozayr Patel, Development Manager, Lancashire County Council

Steve Bowler, Digital Transformation and Implementation Manager, Greensleeves Care

Sian Smail, Health and Social Care Data Analyst, Care Dorset UK

Elliot Goodwin, Area Director, Consensus Support Services

Alicia Ingham, Operational System Improvement Lead, MHA

Jay Harper, Head of Communications and Projects, Rehability UK

Jane Hayden, Head of Technology, Treloar’s

Ross Watson, Senior Product Manager, HC-One

Gemma Pitman-McGrath, Clinical Development Nurse, Barchester

Steve Daniels, Operational Change Lead, iVolve Care & Support

We are so excited for the future of AI & data in health, care and support technology. Find out why on our Nourish AI page.

Anthropic recently published a new constitution for ‘Claude’, their GenAI model. The AI is designed for natural conversation, complex reasoning, coding, analysis, and content creation. Anthropic was founded with the aim of creating AI with a focus on safety and ethics. That ambition is shared in the health and care sectors, where safety is a paramount concern given the huge responsibility communities entrust to their care and support providers. Throughout their technology’s iterations, Anthropic repeatedly look to connect their functionality to human values. This approach resonates with us at Nourish, where we firmly root our AI models in lived experience by always keeping a ‘human in the loop’ throughout the design process. We believe principles are essential for applied AI, as we continue to search for the most impactful and effective applications of AI in health and care technology.

Our Director of AI & Data, Sudha Regmi, saw several parallels between Claude’s new constitution and our own approach at Nourish. Here are her three key takeaways from the recent announcement.

“When you’re raising kids, you can’t just hand them a list of rules and hope it covers every weird eventuality life throws at them,” explained Sudha. “You try to teach values and principles, then trust they’ll generalise when it matters.”

Anthropic is basically saying the same thing. No, not that all GenAI is inherently attention-seeking and impulse-driven. (That’s just a good portion of the user base.) They are saying: don’t just tell the model what to do, teach it why with context. This gives the model the ability to generalise across novel situations. With ‘rules’ in place, GenAI can become confused by edge cases. With ‘principles’, the model is better equipped to understand context and infer the correct decision.

“This is an understandable but misleading hangover from early AI scepticism. Pretraining is very important for AI models, but it is not the be-all and end-all, especially for applied AI. Post-training is also very important, along with feedback loops, policy layers and product choices. When choosing how to apply AI models to modern challenges, there is no predictable answer. We must, to borrow a technique from social care, review our impact, continuously improve and remain connected to lived experience.”

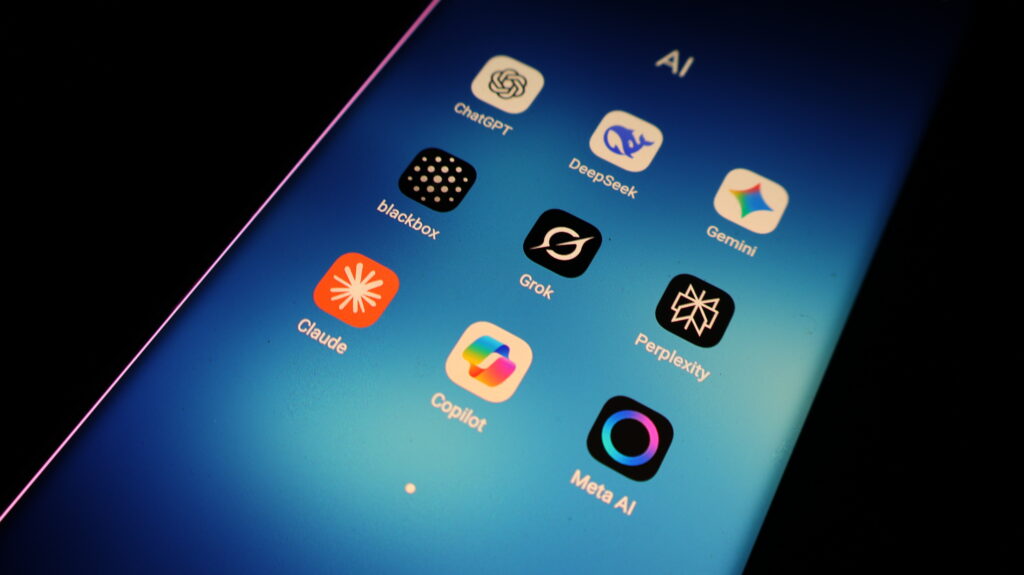

No two experiences are exactly the same. You can see this in the real world, with “the same base model” behaving differently depending on where you use it. Put the same prompt into different models and look at the output. These differences show up across both different models and different iterations. For example, think about the difference in output when Copilot (in GPT-5 mode), ChatGPT and OpenAI GPT models called via APIs carry out the same tasks. Or the differences when using Claude Sonnet in Claude Code vs Cursor.

There are vast differences in models that share similar origin stories. Applied AI will continue to develop in a huge variety of fields. We must be able to more deeply understand the context of the model, before we can effectively teach the model the context of its application.

“The constitution is explicit about priority order: safe → ethical → compliant → helpful. It includes ‘hard constraints’ for especially high-stakes work. It validates that the process of building AI is just as important as the product.”

The process of building applied AI is crucial. Principles are deliberate, and they can’t just live on a slide deck. They must be represented in your approach to AI design, including:

Incentivising the right kind of outputs for the context

Health and care are not vibes-based domains. Decisions carry a huge amount of weight and involve highly private information. Context is essential for understanding both best practice and the reality of the responsibility.

Human-in-the-loop validation with clinical experts

Lived experience remains the alpha and the omega for technology design. Without human input into the design process, AI can never be considered responsibly designed.

Tight feedback loops with our customers to pressure-test what “good” looks like in practice

That’s what breeds confidence in applied AI. Not reverential ‘this is what the model said to do’. Not black box decision makers. But results driven by a combination of principles, process and validation.

If you’re building with AI right now, or even thinking about adopting AI tools, we’d recommend reading the constitution, even if you disagree with parts of it. It’s a useful window into how one lab is translating “alignment” into something operational.

If you want to know more about Nourish Care’s approach to applied AI for health, care and support, click here.

Artificial intelligence (AI) has shifted from a niche topic in tech circles to a headline conversation across health and care over the past couple of years. What was once the preserve of data scientists and software engineers is now discussed in care home corridors, home care offices, and even over the dinner table! But while the hype is loud, the reality for social care is more nuanced, filled with both opportunity and the responsibility to get it right. Join us as we explore the reality and potential of AI in social care.

Much of the buzz stems from Generative AI (GenAI): tools like ChatGPT and Microsoft Copilot that create new content such as text or images. These have made AI accessible to anyone, even those with no technical background. This accessibility has sparked imagination and curiosity across the care sector. Care leaders are starting to ask, “What can AI do for us?”

However, the reality is that large-scale return on investment (ROI) for AI in social care hasn’t been fully realised yet. While the tech industry is racing ahead, the challenge for our sector is not to chase AI for its novelty, but to apply it deliberately to real business and care problems.

Two clear paths exist:

For obvious reasons, at Nourish we believe it’s the second path that holds real promise for social care.

At its best, AI offers a way to augment human work, not replace it. In social care, this means easing the administrative load, surfacing critical insights faster, and supporting preventative approaches that improve quality of life for the people we serve.

A useful way to think about this is through the ‘Triple Aim’ framework from US healthcare, which focuses on:

For UK care providers, AI can directly support these aims. For example:

Crucially, this is not about replacing carers with algorithms. It’s about using AI in social care to lift some of the cognitive burden, so that staff can spend more time doing what only humans can: building relationships and delivering compassionate, intuitive care.

AI depends on data, and in social care, the ongoing shift to digital systems means we now have more data than ever before. Care records, care notes, health metrics, and incident reports all hold valuable insights if we know how to extract them.

Two main AI techniques are particularly relevant:

The most effective approach blends these techniques with expert oversight. In supervised learning, for instance, models learn from examples that people have labelled, so the AI’s “understanding” is guided by the experience of clinical professionals and frontline carers. This in turn helps ensure the insights it produces are safe, relevant, and trustworthy.

Social care deals with some of the most sensitive data possible, and the wellbeing of real people. That makes Responsible AI not just an ethical choice but a practical necessity.

Responsible AI follows core principles:

This last principle is crucial. In social care, AI should suggest, not act. That is what we mean by augmenting, rather than replacing, care. A falls-risk prediction, for example, should prompt a human review and intervention, as opposed to automatically changing a care plan.

This protects against the risks of over-automation, so providers can ensure that the irreplaceable human qualities of care (empathy, intuition, and contextual judgment) remain at the centre. This is why we build systems that are transparent and auditable, so we understand why recommendations are given and remain accountable for them.
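For readers who think in code, the ‘suggest, not act’ principle can be sketched in a few lines of Python. This is an illustrative sketch only, not a description of how any Nourish product works: the `Suggestion` and `ReviewQueue` names, the 0.7 threshold and the care plan fields are all assumptions made up for the example. The point it shows is that a risk score can only create a suggestion with its reasons attached, and only a named human reviewer can change the care plan, with every step written to an audit log.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

REVIEW_THRESHOLD = 0.7  # assumed cut-off, purely illustrative


@dataclass(frozen=True)
class Suggestion:
    """A recommendation awaiting human review (hypothetical structure)."""
    person_id: str
    risk_score: float
    reasons: Tuple[str, ...]  # why the suggestion was raised, kept for transparency


@dataclass
class ReviewQueue:
    """Hypothetical queue: the model adds suggestions, people make decisions."""
    pending: List[Suggestion] = field(default_factory=list)
    audit_log: List[str] = field(default_factory=list)

    def suggest(self, person_id: str, risk_score: float,
                reasons: Tuple[str, ...]) -> Optional[Suggestion]:
        """Queue a suggestion if the score is high enough. Never edits a care plan."""
        if risk_score < REVIEW_THRESHOLD:
            return None
        suggestion = Suggestion(person_id, risk_score, reasons)
        self.pending.append(suggestion)
        self.audit_log.append(
            f"suggested review for {person_id}: {', '.join(reasons)}"
        )
        return suggestion

    def decide(self, suggestion: Suggestion, reviewer: str, approve: bool,
               care_plan: Dict[str, str], change: Dict[str, str]) -> None:
        """Record a human decision; the care plan changes only on approval."""
        self.pending.remove(suggestion)
        if approve:
            care_plan.update(change)
        outcome = "approved" if approve else "declined"
        self.audit_log.append(
            f"{reviewer} {outcome} change for {suggestion.person_id}"
        )


# Usage: the prediction alone leaves the care plan untouched.
care_plan = {"mobility_support": "standard"}
queue = ReviewQueue()
raised = queue.suggest("resident-001", 0.82,
                       ("two recent near-misses", "new medication"))
# A senior carer reviews the reasons and chooses to act on the suggestion.
queue.decide(raised, reviewer="senior carer", approve=True,
             care_plan=care_plan, change={"mobility_support": "enhanced"})
```

Keeping the reasons on the suggestion and the reviewer’s name in the log is one simple way to make each recommendation explainable and each decision accountable.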

Responsible AI opens the door to several promising use cases:

These examples share a common goal: moving from reactive care (‘What happened?’) to proactive and preventative care (‘Why is it happening, and how can we change the outcome?’).

For AI to be embraced in social care, trust must be earned and maintained. This means:

Trust isn’t a one-off achievement. It’s a relationship that must be nurtured through ongoing transparency and collaboration.

The potential of AI in social care is undeniable. Used responsibly, it can improve outcomes, reduce costs, and allow carers to focus more on human connection. But the key word is ‘responsibly’: rooted in human experience and shaped by the people and communities it supports.

The most effective AI in our sector will come from co-production: solutions developed hand-in-hand with those who understand the realities of care and support, both those who provide it and those who draw on it. This ensures the technology supports the real needs of the sector, rather than forcing the sector to adapt to the technology.

In the end, AI in social care should not be about replacing human judgment but empowering it. The goal is a future where technology enhances the compassion, skill, and dedication that define our sector, where AI is the assistant and people remain firmly in charge.